How to Test Your Robots.txt File for Optimal SEO

How to Test Robots.txt Files: Ensuring Smooth Crawling and SEO Success

The robots.txt file acts as a silent guide for search engine crawlers like Googlebot, directing them through the vast corridors of your website.

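It tells crawlers which parts of your site they may request. It controls crawling rather than indexing, but because search engines can only evaluate pages they are able to fetch, it still shapes your website’s search engine optimization (SEO) performance.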

A well-configured robots.txt file prevents crawlers from wasting resources on irrelevant content, optimizes crawl efficiency, and ensures your important pages get discovered and indexed by search engines.

However, a single misstep in your robots.txt directives can unintentionally block search engines from crucial content, hindering your website’s visibility.

Testing your robots.txt file is therefore a vital step in your SEO strategy. This guide covers the methods and tools for verifying that the file operates as intended and doesn’t create roadblocks for search engine crawlers.

Demystifying Robots.txt Syntax

Before delving into testing methods, let’s revisit the fundamental structure of a robots.txt file. It’s a text file consisting of directives that specify instructions for different user-agents (typically search engine crawlers) on how to interact with your website’s content.

Here’s a breakdown of the key components:

- User-agent: This line identifies the specific crawler the directive applies to. Common examples include “Googlebot,” “Bingbot,” etc.

- Disallow: This directive instructs the user-agent to not crawl a specific URL path or directory.

- Allow: While less frequently used, the Allow directive overrides a Disallow directive for a specific path inside a disallowed directory. Major crawlers such as Googlebot resolve conflicts by applying the most specific (longest) matching rule.

- Crawl-delay: This less common, non-standard directive specifies a delay in seconds that the user-agent should wait between requests to your website. It can help manage server load caused by crawling, but support varies: Bingbot honors it, while Googlebot ignores it.
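
To make the interaction between Allow and Disallow concrete, here is a minimal Python sketch of the longest-match precedence described by the robots.txt standard (RFC 9309) and followed by Googlebot: the most specific matching rule wins, and Allow wins a tie. The function name `is_allowed` and the `/private/` paths are hypothetical, and wildcard patterns (`*`, `$`) are deliberately left out.

```python
def is_allowed(rules, path):
    """Decide whether `path` may be crawled under one user-agent group.

    `rules` is a list of (directive, path_prefix) pairs, e.g.
    ("Disallow", "/private/"). The longest matching prefix wins;
    on equal length, Allow takes precedence over Disallow.
    """
    best_len = -1
    allowed = True  # no matching rule means the path is crawlable
    for directive, prefix in rules:
        # An empty prefix matches nothing; skip rules that do not apply.
        if not prefix or not path.startswith(prefix):
            continue
        is_allow = directive.lower() == "allow"
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


# Hypothetical rules: block a directory but re-open one file inside it.
RULES = [
    ("Disallow", "/private/"),
    ("Allow", "/private/press-kit.pdf"),
]

for candidate in ("/private/press-kit.pdf", "/private/notes.html", "/blog/"):
    print(candidate, "->", "allowed" if is_allowed(RULES, candidate) else "blocked")
```

Because precedence depends on rule length rather than position, the order of the two lines in the file does not change the outcome under this model.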

Example Robots.txt File:

    User-agent: Googlebot
    Disallow: /images/temp/
    Disallow: /login/
    Crawl-delay: 3

    User-agent: Bingbot
    Disallow: /admin

    # Allow all other user-agents
    User-agent: *
    Allow: /

Explanation of the Example:

- Googlebot is instructed not to crawl the “/images/temp/” and “/login/” directories.

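- The Googlebot group also sets a 3-second crawl delay. This illustrates the syntax only, since Googlebot ignores Crawl-delay.

- Bingbot is restricted from any path that begins with “/admin”, which covers the “/admin” directory and everything inside it.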

- All other user-agents are allowed to crawl the entire website (/) by default.
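
The reading above can be checked programmatically. The following sketch feeds the example file to Python’s standard-library `urllib.robotparser` and asks it about a few URLs. The example.com URLs and the DuckDuckBot check are assumptions added for illustration. Note that this parser uses simple prefix matching and reports the Crawl-delay value as written, so it approximates rather than reproduces what any particular search engine does.

```python
from urllib.robotparser import RobotFileParser

# The example robots.txt from this article, as a string.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /images/temp/
Disallow: /login/
Crawl-delay: 3

User-agent: Bingbot
Disallow: /admin

# Allow all other user-agents
User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse from memory, no network access

# Hypothetical URLs on a placeholder domain.
checks = [
    ("Googlebot", "https://example.com/login/"),
    ("Googlebot", "https://example.com/blog/"),
    ("Bingbot", "https://example.com/admin/users"),
    ("Bingbot", "https://example.com/login/"),
    ("DuckDuckBot", "https://example.com/admin"),
]

for agent, url in checks:
    verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
    print(f"{agent:12} {url:35} {verdict}")

# The delay declared in the Googlebot group (Googlebot itself ignores it).
print("Declared Googlebot crawl delay:", parser.crawl_delay("Googlebot"))
```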

Important Note: It’s crucial to remember that robots.txt is a suggestion, not a strict directive. Well-behaved search engines will typically respect your robots.txt instructions. However, malicious bots may disregard them entirely.

Unveiling Testing Techniques: A Multi-faceted Approach

Now that you grasp the robots.txt syntax, let’s explore various methods to test its functionality effectively:

1. Manual Testing with User-Agent String:

This method involves manually requesting specific URLs with different user-agent strings to see how your server responds to each crawler.

Steps:

- Identify the URL: Choose a URL you want to verify if it’s blocked or allowed for crawling by a specific user-agent.

- Utilize a User-Agent Switcher: Browser extensions such as User-Agent Switcher for Chrome (https://chrome.google.com/webstore/detail/user-agent-switcher-for-c/djflhoibgkdhkhhcedjiklpkjnoahfmg) let you change your browser’s user-agent string. Set it to the user-agent you want to test (e.g., “Googlebot”).

- Access the URL: Try accessing the chosen URL with the modified user-agent.

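- Analyze the Response: Keep in mind that robots.txt is not enforced by your server, so a disallowed URL will normally still load. What this check reveals is server-side behavior: if a URL returns an error (e.g., 403 Forbidden) only for a particular user-agent, a firewall or server rule is blocking that crawler, independent of robots.txt. To confirm whether robots.txt disallows the URL, open your site’s /robots.txt and compare the URL against the rules listed for that user-agent.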

Drawbacks: This method can be time-consuming for testing multiple URLs and user-agents.
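
The same check can be scripted rather than clicked through. The sketch below sends a HEAD request with a chosen User-Agent header and returns the HTTP status code. The helper names are assumptions, and the Googlebot string is one commonly published variant. Like the browser approach, it reports how the server responds to that user-agent, not what robots.txt says.

```python
import urllib.error
import urllib.request

# One commonly published Googlebot user-agent string (assumption: swap in
# the variant that matches the crawler you care about).
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def build_request(url, user_agent):
    """Build a HEAD request that identifies itself with the given user-agent."""
    return urllib.request.Request(
        url, headers={"User-Agent": user_agent}, method="HEAD"
    )


def status_for(url, user_agent, timeout=10):
    """Return the HTTP status code the server sends to this user-agent."""
    try:
        with urllib.request.urlopen(build_request(url, user_agent), timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as exceptions; the code is still the answer.
        return err.code
```

Calling `status_for("https://example.com/login/", GOOGLEBOT_UA)` and then repeating the call with a regular browser string lets you compare the two status codes. Here example.com stands in for your own domain.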

2. Online Robots.txt Testers:

Several online tools can simplify robots.txt testing. These tools allow you to enter your website’s URL and a specific user-agent string. The tool then analyzes your robots.txt file and predicts whether the user-agent is allowed or disallowed to crawl a particular URL.

Popular Online Robots.txt Testers:

- SEO Site Checkup Robots.txt Test: [https://seositecheckup.com/tools]

- Technical SEO Robots.txt Tester: [https://technicalseo.com/tools/docs/robots-txt/]

- Ryte Robots.txt validator and testing tool: [https://en.ryte.com/free-tools/robots-txt]

- SE Ranking Robots.txt Tester: [https://seranking.com/free-tools/robots-txt-tester.html]

Advantages of Online Testers:

- Convenience: They offer a quick and easy way to test specific URLs.

- User-Friendly Interface: Most tools provide a clear interface for input and results.

Limitations of Online Testers:

- Limited Functionality: These tools may not always accurately predict crawling behavior due to complex robots.txt configurations or server-side restrictions.

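- Inaccurate for Unreleased Robots.txt: Tools that only fetch the live file cannot test a robots.txt that hasn’t been uploaded to your website yet. To check a draft, you need a tester that lets you paste or edit the rules directly.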

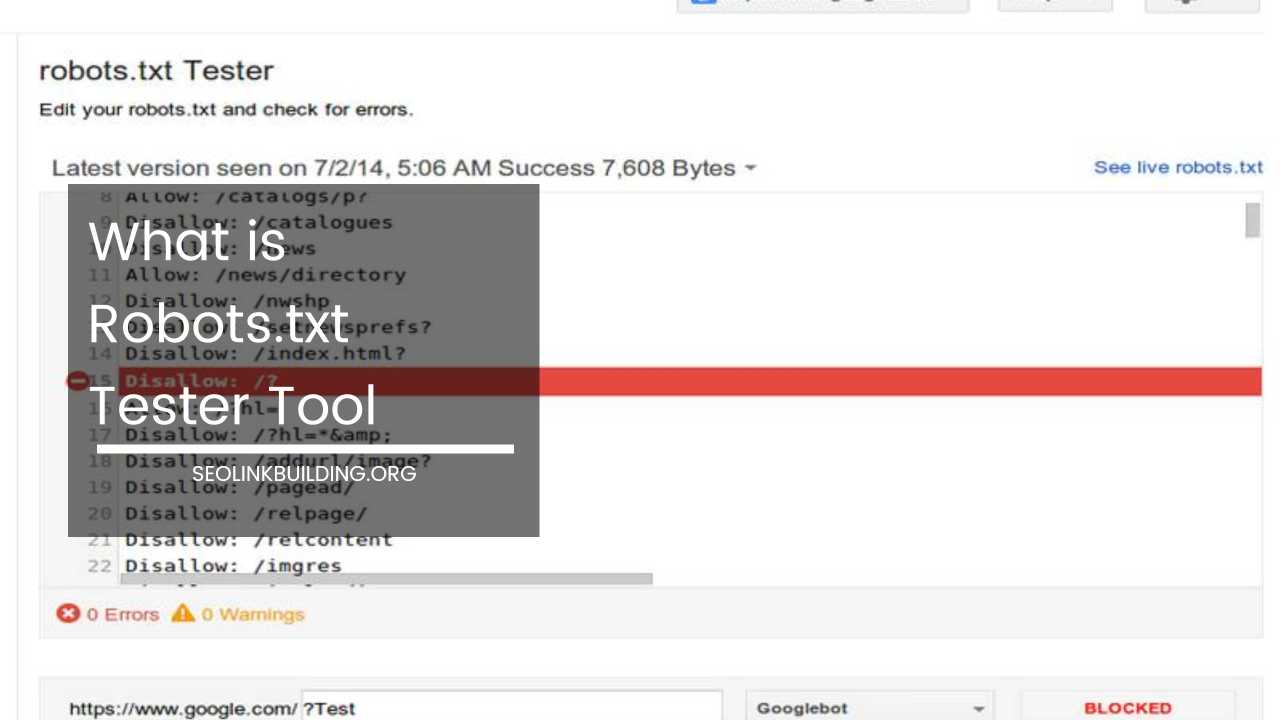

3. Google Search Console: A Powerful Ally

Google Search Console (GSC) offers a robust set of tools for managing your website’s presence in Google search results. The standalone robots.txt Tester that used to sit under the “Crawl” section has been retired; its role is now covered by the robots.txt report and the URL Inspection tool, which together show how Googlebot sees your robots.txt file.

Here’s how to leverage GSC for robots.txt testing:

- Access GSC: Sign in to your Google Search Console account and select the website you want to test.

- Open the robots.txt Report: Under “Settings,” open the robots.txt report. It lists the robots.txt files Google found for your site, when each was last fetched, and any warnings or errors raised while parsing them.

- Input the URL: To check a specific page, enter its URL in the URL Inspection tool.

- Analyze the Results: The inspection result states whether crawling is allowed or whether the URL is blocked by robots.txt. Both tools reflect Googlebot’s view only, so other crawlers need to be checked separately.

Benefits of Using GSC:

- Accuracy: Since it’s a Google product, it provides the most reliable assessment of how Googlebot interprets your robots.txt directives.

- Detailed Insights: It surfaces fetch problems and parsing warnings for the robots.txt file itself, and tells you when robots.txt is the reason a URL cannot be crawled.

Limitations of GSC:

- Limited Testing Scope: While GSC provides valuable information, it’s best suited for testing individual URLs rather than comprehensive robots.txt validation.

4. Advanced Testing with Debugging Tools:

For advanced users and developers, site crawlers such as Screaming Frog or Sitebulb can be used for more comprehensive robots.txt testing. These tools crawl your entire website while obeying your robots.txt the way a search engine crawler would, and report which URLs the file blocks.

Advantages of Debugging Tools:

- Comprehensive Testing: They can crawl your entire website, identifying potential crawling issues beyond specific URLs.

- Detailed Reports: They generate reports that outline how your robots.txt impacts crawling efficiency and identify any potential issues.

Disadvantages of Debugging Tools:

- Technical Expertise Required: These tools require some technical understanding of SEO and website crawling to interpret the reports effectively.

- Cost Factor: Some debugging tools might have paid plans with advanced functionalities.
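
For a rough, scripted approximation of this kind of site-wide check, you can run a list of URLs (for example, exported from your sitemap) through Python’s standard-library `urllib.robotparser` for several user-agents at once. The function name `blocked_urls_by_agent`, the sample rules, and the example.com URLs are hypothetical. The standard-library parser uses simple prefix matching, so results for wildcard or conflicting rules may differ from what a real crawler does.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls_by_agent(robots_text, user_agents, urls):
    """Map each user-agent to the subset of `urls` it may not crawl."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return {
        agent: [url for url in urls if not parser.can_fetch(agent, url)]
        for agent in user_agents
    }


# Hypothetical rules and URL list for illustration.
SAMPLE_ROBOTS = """\
User-agent: Googlebot
Disallow: /login/

User-agent: Bingbot
Disallow: /admin
"""

SAMPLE_URLS = [
    "https://example.com/",
    "https://example.com/login/",
    "https://example.com/admin/users",
]

report = blocked_urls_by_agent(SAMPLE_ROBOTS, ["Googlebot", "Bingbot"], SAMPLE_URLS)
for agent, blocked in report.items():
    print(f"{agent}: {len(blocked)} blocked -> {blocked}")
```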

Choosing the Right Testing Method:

The optimal testing method depends on your specific needs and technical expertise:

- For quick checks on individual URLs: Online robots.txt testers or GSC are suitable options.

- For comprehensive testing and in-depth analysis: Site crawlers like Screaming Frog or Sitebulb offer a more thorough approach.

- For verifying how Googlebot sees your website: GSC is the most reliable option due to its direct connection to Google.

Best Practices for Effective Robots.txt Testing:

- Test Early and Often: Integrate robots.txt testing into your website development and maintenance process.

- Test for Different User-Agents: While Googlebot is the primary concern, consider testing for other common crawlers like Bingbot or YandexBot.

- Test Unpublished Robots.txt Files: Use a tester that accepts pasted rules, or a local parser, to validate your robots.txt before uploading it to your server.

- Focus on Crawl Efficiency: Don’t just block URLs; use robots.txt strategically to optimize crawl efficiency and avoid overwhelming search engines with unnecessary requests.
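
One way to act on the “test early” and “test unpublished files” practices is a small pre-deployment check that runs a draft robots.txt against lists of paths that must stay crawlable and paths that must stay blocked. This is a minimal sketch using Python’s standard-library `urllib.robotparser`; the function name `check_draft` and the sample paths are hypothetical, and the parser’s prefix matching only approximates how individual search engines interpret the file.

```python
from urllib.robotparser import RobotFileParser


def check_draft(robots_text, user_agent, must_allow, must_block):
    """Return a list of problems found in a draft robots.txt (empty if none)."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())  # the draft never has to be uploaded
    problems = []
    for path in must_allow:
        if not parser.can_fetch(user_agent, path):
            problems.append(f"{user_agent} is blocked from {path}")
    for path in must_block:
        if parser.can_fetch(user_agent, path):
            problems.append(f"{user_agent} can still crawl {path}")
    return problems


# Hypothetical drafts: the first blocks the whole site by mistake.
BAD_DRAFT = "User-agent: *\nDisallow: /\n"
GOOD_DRAFT = "User-agent: *\nDisallow: /admin/\n"

for name, draft in (("bad draft", BAD_DRAFT), ("good draft", GOOD_DRAFT)):
    issues = check_draft(draft, "Googlebot", must_allow=["/products/"], must_block=["/admin/"])
    print(name, "->", issues or "no problems found")
```

Running a check like this for each crawler you care about, whenever the file changes, catches an accidental site-wide Disallow before it reaches production.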

By following these strategies and employing the appropriate testing methods, you can ensure your robots.txt file functions as intended, facilitating smooth crawling by search engines and ultimately propelling your website’s SEO success.